This post documents my automata-theoretic approach to solving regex crosswords, which is unlike other approaches out there (see Related work below). The biggest limitation of this computer science theory approach is that it only works for truly regular regexes (so no capture groups, look-aheads, etc). I have implemented it in Java using the dk.brics.automaton library. Automata-theoretic approach

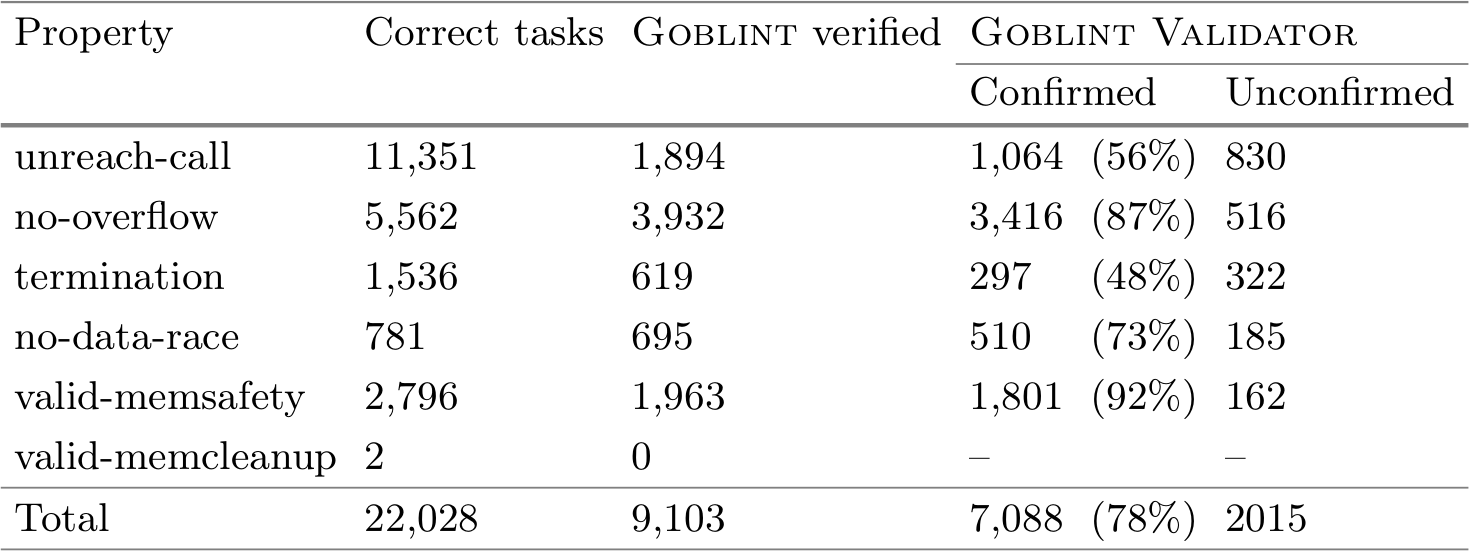

Let a rectangular regex crossword with \(h\) rows and \(w\) columns be defined by:

- row regexes \(R_0, R_1, \dots, R_{h-1}\) (matching left-to-right),

- column regexes \(C_0, C_1, \dots, C_{w-1}\) (matching top-to-bottom).

Let’s view the \(w \times h\) rectangle as a string of length \(w \times h\) where the rows have been concatenated (i.e., row-major order).

Example

Consider Intermediate: Always remember from regexcrossword.com as an example:

\[\begin{array} {|r|c|c|c|}\hline & \texttt{UB|IE|AW} & \texttt{[TUBE]*} & \texttt{[BORF].} \\ \hline \texttt{[NOTAD]*} & ? & ? & ? \\ \hline \texttt{WEL|BAL|EAR} & ? & ? & ? \\ \hline \end{array}\] Row automata

First, construct a width automaton \(W\) which accepts all strings of length \(w\). This automaton corresponds to the regex .{w}.

Then, for each row regex \(R_i\) construct a row automaton \(R_i'\) as follows:

\[R_i' = W^{i} \circ (R_i \cap W) \circ W^{w - i - 1},\] where

- \(\circ\) is the binary operator for concatenating two automata,

- exponentation self-concatenates indicated number of copies of the automaton (power 0 gives the automaton whose language contains only the empty string),

- \(\cap\) is the binary operator for intersection of two automata.

Example

For the above example, the automata are the following.

\(W\) is the width automaton corresponding to the regex .{3}:

graph LR

start:::hidden

w0(($$w_0$$))

w1(($$w_1$$))

w2(($$w_2$$))

w3((($$w_3$$)))

start-->w0-->|.|w1-->|.|w2-->|.|w3

classDef hidden display: none;

For brevity, a single character regex is used to describe the possible transitions, as opposed parallel transitions for each character in the alphabet. Dead/trap states and transitions are also omitted.

- \(R_0\) is the automaton corresponding to the regex

[NOTAD]*: graph LR

start:::hidden

r0((($$r_0$$)))

start-->r0-->|"[NOTAD]"|r0

classDef hidden display: none;

For brevity, a single character regex is used to describe the possible transitions, as opposed to 5 self-loops.

\(R_0' = (R_0 \cap W) \circ W\) is the row automaton (which happens to correspond to the regex [NOTAD]{3}.{3}):

graph LR

start:::hidden

q0(( ))

q1(( ))

q2(( ))

q3(( ))

q4(( ))

q5(( ))

q6((( )))

start-->q0-->|"[NOTAD]"|q1-->|"[NOTAD]"|q2-->|"[NOTAD]"|q3-->|.|q4-->|.|q5-->|.|q6

classDef hidden display: none;

- \(R_1\) is the automaton corresponding to the regex

WEL|BAL|EAR: graph LR

start:::hidden

r0(($$r_0$$))

r1(($$r_1$$))

r2(($$r_2$$))

r3(($$r_3$$))

r4(($$r_4$$))

r5(($$r_5$$))

r6(($$r_6$$))

r7((($$r_7$$)))

start-->r0-->|W|r1-->|E|r2-->|L|r7

r0-->|B|r3-->|A|r4-->|L|r7

r0-->|E|r5-->|A|r6-->|R|r7

classDef hidden display: none;

(For symmetry, this has not been minimized.)

\(R_1' = W \circ (R_1 \cap W)\) is the row automaton (which happens to correspond to the regex .{3}(WEL|BAL|EAR)):

graph LR

start:::hidden

w0(( ))

w1(( ))

w2(( ))

w3(( ))

start-->w0-->|.|w1-->|.|w2-->|.|w3

r1(( ))

r2(( ))

r3(( ))

r4(( ))

r5(( ))

r6(( ))

r7((( )))

w3-->|W|r1-->|E|r2-->|L|r7

w3-->|B|r3-->|A|r4-->|L|r7

w3-->|E|r5-->|A|r6-->|R|r7

classDef hidden display: none;

Column automata

While the construction of automata for each row regex is rather intuitive, it’s significantly more involved for column regexes. That is because the characters in the row-major string that make up the subsequence which needs to match the column regex are not consequtive. Nevertheless, it is possible using a novel construction.

First, for each column regex \(C_i\) construct a guard automaton \(O^i \circ W^*\) which is in an accepting state at every position in the row-major string that belongs to column \(i\). Here, \(O\) (for offset) is the automaton corresponding to the regex .. The guard automaton is a repetition of the width automaton \(W\), because every \(w\)-th position in the row-major string is in the same column, prefixed by an offset automaton \(O^i\) to start the repetition from the corresponding column. This automaton corresponds to the regex .{i}(.{w})*.

Then, the column regex \(C_i\) construct a column automaton \(C_i'\) as follows:

\[C_i' = C_i \triangleleft (O^i \circ W^*),\] where \(A \triangleleft G\) is a special product-like guarded automaton (\(A\) guarded by \(G\)), where \(A\) transitions only if \(G\) is in an accepting state. In general \(A \triangleleft G\) is defined as:

- Its states \((a, g)\) are from the product set of states \(A \times G\).

- Its initial state consists of the initial states of \(A\) and \(G\).

- Its state \((a, g)\) is accepting if \(a\) is an accepting state of \(A\).

- In state \((a, g)\) with input character \(c\) the automaton steps to

- \((a', g')\) if \(g\) is an accepting state of \(G\), \(A\) steps from \(a\) to \(a'\) with \(c\) and \(G\) steps from \(g\) to \(g'\) with \(c\),

- \((a, g')\) if \(g\) is not an accepting state of \(G\) and \(G\) steps from \(g\) to \(g'\) with \(c\).

(This has some similarities to stretching of automata.)

Example

For the above example, the automata are the following.

- \(C_0\) is the automaton corresponding to the regex

UB|IE|AW: graph LR

start:::hidden

c0(($$c_0$$))

c1(($$c_1$$))

c2(($$c_2$$))

c3(($$c_3$$))

c4((($$c_4$$)))

start-->c0-->|U|c1-->|B|c4

c0-->|I|c2-->|E|c4

c0-->|A|c3-->|W|c4

classDef hidden display: none;

\(W^*\) is the guard automaton corresponding to the regex (.{3})*:

graph LR

start:::hidden

w0((($$w_0$$)))

w1(($$w_1$$))

w2(($$w_2$$))

start-->w0-->|.|w1-->|.|w2-->|.|w0

classDef hidden display: none;

\(C_0' = C_0 \triangleleft W^*\) is the column automaton (which happens to correspond to the regex (U..B|I..E|A..W)..):

graph LR

start:::hidden

c0w0(($$c_0,w_0$$))

c1w1(($$c_1,w_1$$))

c1w2(($$c_1,w_2$$))

c1w0(($$c_1,w_0$$))

c4w1((($$c_4,w_1$$)))

c2w1(($$c_2,w_1$$))

c2w2(($$c_2,w_2$$))

c2w0(($$c_2,w_0$$))

c3w1(($$c_3,w_1$$))

c3w2(($$c_3,w_2$$))

c3w0(($$c_3,w_0$$))

c4w2((($$c_4,w_2$$)))

c4w0((($$c_4,w_0$$)))

start-->c0w0-->|U|c1w1-->|.|c1w2-->|.|c1w0-->|B|c4w1

c0w0-->|I|c2w1-->|.|c2w2-->|.|c2w0-->|E|c4w1

c0w0-->|A|c3w1-->|.|c3w2-->|.|c3w0-->|W|c4w1

c4w1-->|.|c4w2-->|.|c4w0

classDef hidden display: none;

- \(C_1\) is the automaton corresponding to the regex

[TUBE]*: graph LR

start:::hidden

c0((($$c_0$$)))

start-->c0-->|"[TUBE]"|c0

classDef hidden display: none;

\(O^1 \circ W^*\) is the guard automaton corresponding to the regex .(.{3})*:

graph LR

start:::hidden

o0(($$o_0$$))

w0((($$w_0$$)))

w1(($$w_1$$))

w2(($$w_2$$))

start-->o0-->|.|w0-->|.|w1-->|.|w2-->|.|w0

classDef hidden display: none;

\(C_1' = C_1 \triangleleft (O^1 \circ W^*)\) is the column automaton (which happens to correspond to the regex .(([TUBE]..)*([TUBE].?)?)? – this is uglier than the automaton):

graph LR

start:::hidden

c0o0((($$c_0,o_0$$)))

c0w0((($$c_0,w_0$$)))

c0w1((($$c_0,w_1$$)))

c0w2((($$c_0,w_2$$)))

start-->c0o0-->|.|c0w0-->|"[TUBE]"|c0w1-->|.|c0w2-->|.|c0w0

classDef hidden display: none;

(Note that although all states shown are accepting, \(C_1'\) does not accept all strings – the dead state for mismatches at [TUBE] is not shown.)

- \(C_2\) is the automaton corresponding to the regex

[BORF].: graph LR

start:::hidden

c0(($$c_0$$))

c1(($$c_1$$))

c2((($$c_2$$)))

start-->c0-->|"[BORF]"|c1-->|.|c2

classDef hidden display: none;

\(O^2 \circ W^*\) is the guard automaton corresponding to the regex ..(.{3})*:

graph LR

start:::hidden

o0(($$o_0$$))

o1(($$o_1$$))

w0((($$w_0$$)))

w1(($$w_1$$))

w2(($$w_2$$))

start-->o0-->|.|o1-->|.|w0-->|.|w1-->|.|w2-->|.|w0

classDef hidden display: none;

\(C_2' = C_2 \triangleleft (O^2 \circ W^*)\) is the column automaton (which happens to correspond to the regex ..[BORF]...):

graph LR

start:::hidden

c0o0(($$c_0,o_0$$))

c0o1(($$c_0,o_1$$))

c0w0(($$c_0,w_0$$))

c1w1(($$c_1,w_1$$))

c1w2(($$c_1,w_2$$))

c1w0(($$c_1,w_0$$))

c2w1((($$c_2,w_1$$)))

start-->c0o0-->|.|c0o1-->|.|c0w0-->|"[BORF]"|c1w1-->|.|c1w2-->|.|c1w0-->|.|c2w1

classDef hidden display: none;

Solution

Finally, construct the solution automaton \(S\) as an intersection of all row and column automata:

\[S = \left(\bigcap_{i=0}^{h-1} R_i'\right) \cap \left(\bigcap_{i=0}^{w-1} C_i'\right).\] This automaton describes all solutions to the regex crossword. If the regex crossword has a unique solution, this automaton is linear and describes exactly one accepted string.

Example

For the above example, the solution automaton is \(S = R_0' \cap R_1' \cap C_0' \cap C_1' \cap C_2'\) (which happens to correspond to the regex ATOWEL):

graph LR

start:::hidden

q0(( ))

q1(( ))

q2(( ))

q3(( ))

q4(( ))

q5(( ))

q6((( )))

start-->q0-->|A|q1-->|T|q2-->|O|q3-->|W|q4-->|E|q5-->|L|q6

classDef hidden display: none;

The solution to the regex crossword can be read off the automaton: the only accepted string is ATOWEL.

Although, I have implemented it in Java, I don’t have useful information about its performance (especially compared to other approaches). The runtimes on small test cases are neglible.

This approach involves a lot of product automata constructions (for intersections and guarded) which, at least in theory, can yield quite large automata. My hunch is that the intermediate automata, when minimized, are relatively small compared to the theoretical bounds (as also seen in the example). Conventionally regex crosswords have unique solutions, so as more automata are intersected more restrictions are combined, converging towards a smaller language with a smaller automaton.

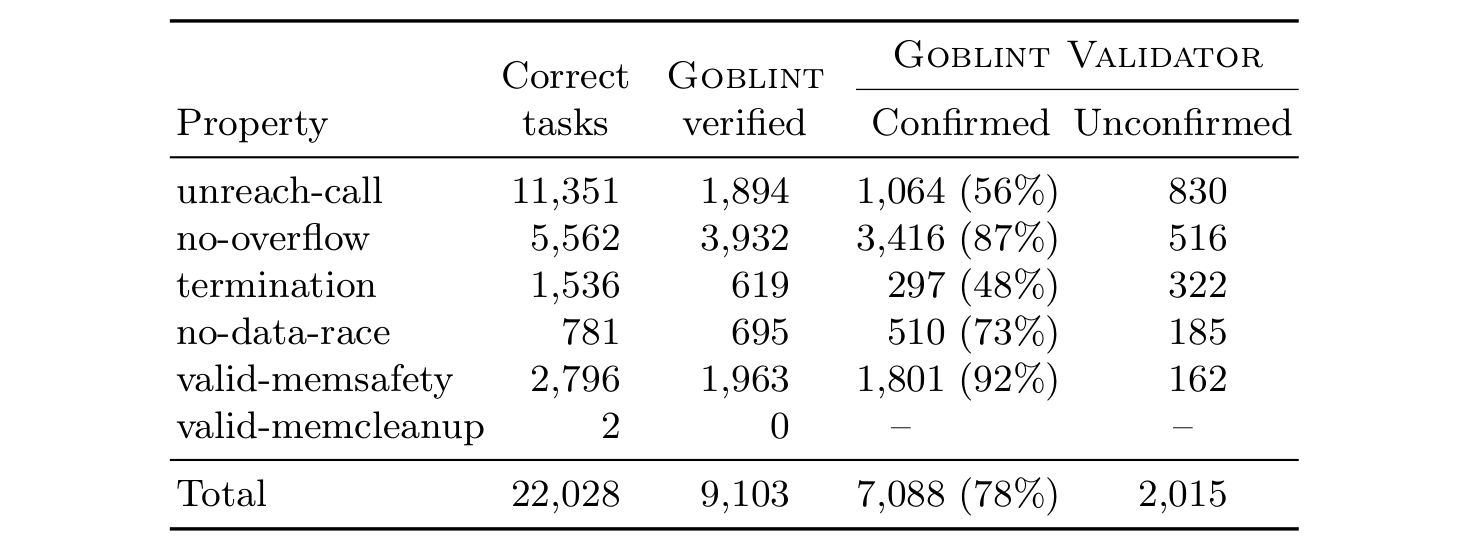

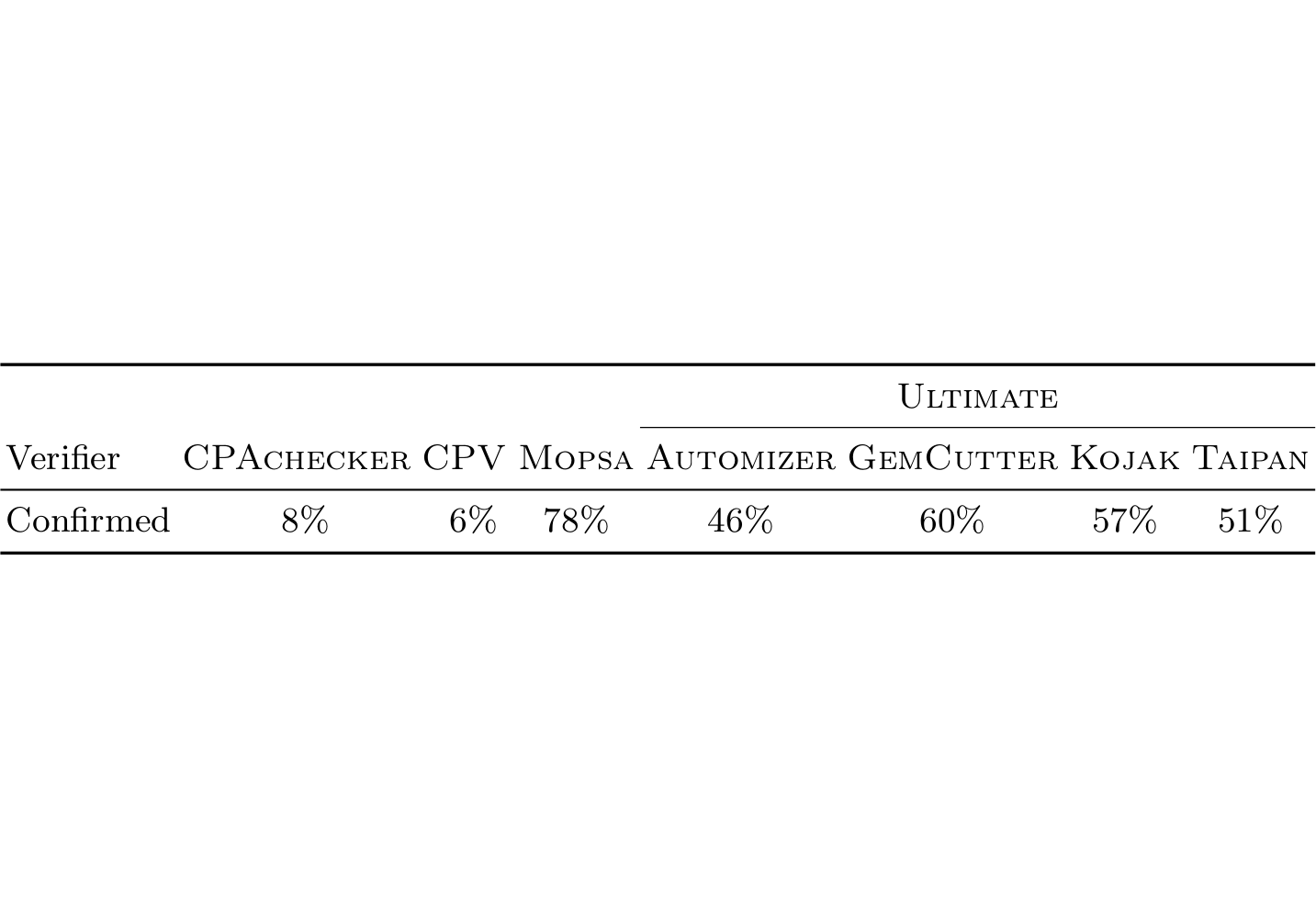

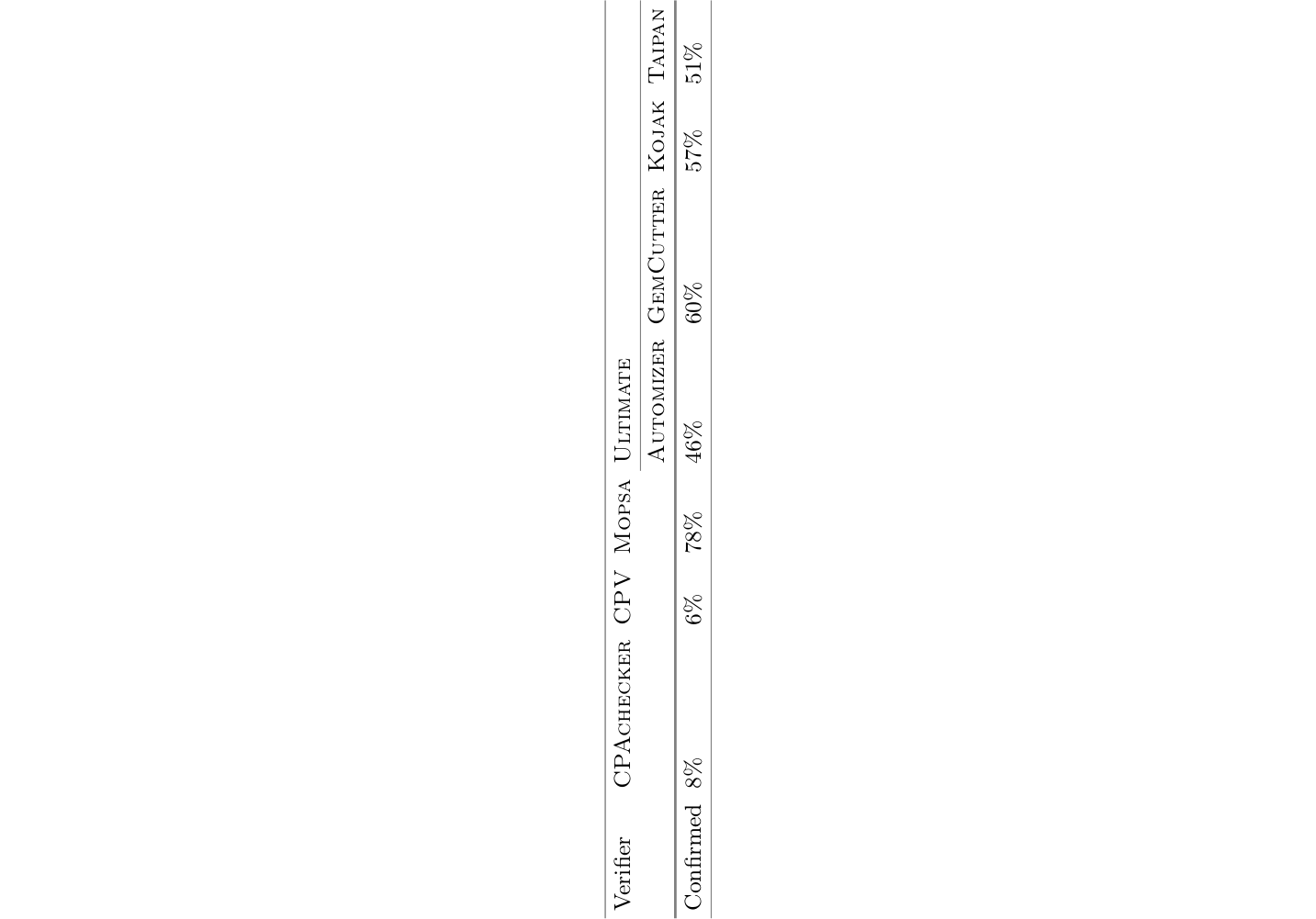

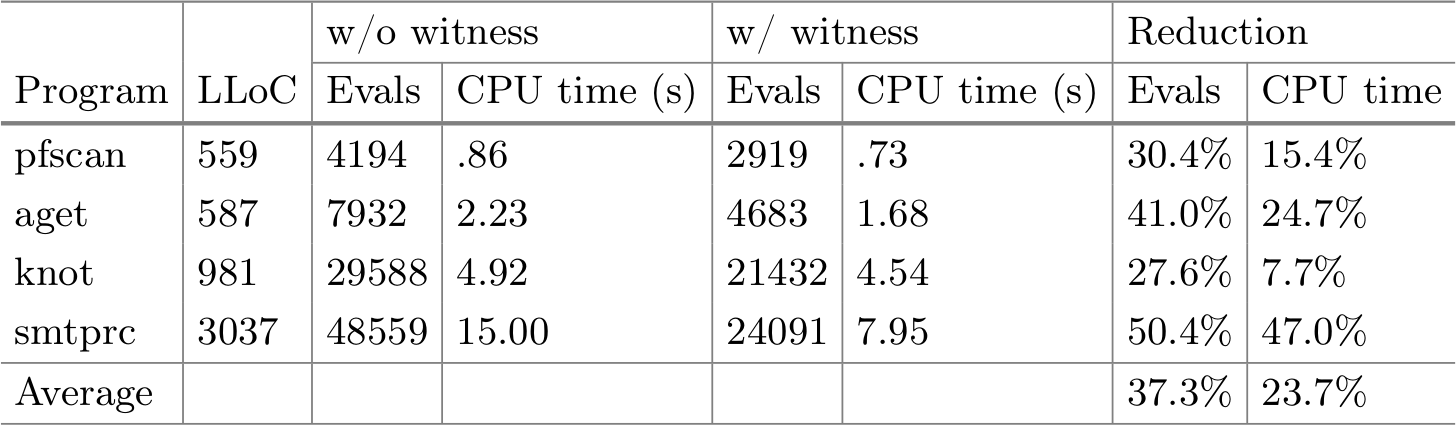

The following table gives an overview of various approaches to the regex crossword problem. Most seem to use more brute force (backtracking, search, SMT), but also target non-regular regexes which cannot be expressed as finite automata.

]]>